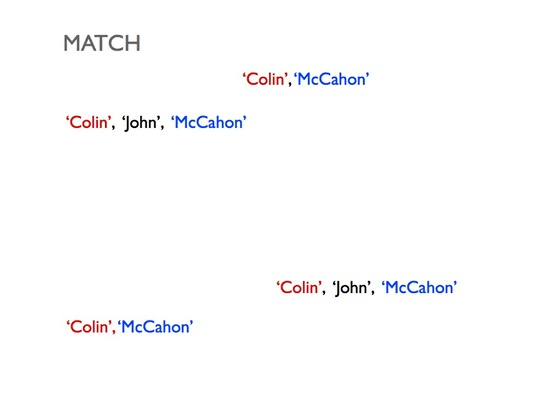

Colin McCahon | McCahon, Colin | McCahon, Colin John, 1919-1987

Posted on 17 December 2013 by Thomasin

On November 26 I presented a talk on linked data and entity reconciliation at the National Digital Forum held at Te Papa in Wellington. These are my speaker's notes.

Linked data is an amazing yet elusive idea. It extends the conventional Web by providing a means to identify and refer to specific entities or concepts and supplies a way to describe how those things are related. Together, the entities, concepts and relationships constitute a set of assertions about how we think the world is... a set of assertions that can be queried, analysed, extended, shared and visualised.

So, for example, our collections might have a person entity "Colin McCahon", a place entity "Timaru" and a subject concept "modern painter". Linked data enables you to encode little triplish statements like “Colin McCahon was born in Timaru” or “Colin McCahon was a modern painter” that can slot into a much larger data network of assertions spanning institutions around the world—a network where you could ask questions like, "Show me all 20th Century painters who were born near Timaru" or "Who were McCahon's contemporaries? And let me see a chronology of their major paintings."
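To make the "triplish" idea concrete, here is a minimal sketch that stores assertions as plain Python tuples. It deliberately avoids any real RDF library, and the predicate names (`born_in`, `is_a`, `located_in`) are made up for illustration rather than taken from an actual vocabulary.

```python
# Each assertion is a (subject, predicate, object) triple.
# The predicate names are illustrative, not a real linked data vocabulary.
triples = [
    ("Colin McCahon", "born_in", "Timaru"),
    ("Colin McCahon", "is_a", "modern painter"),
    ("Timaru", "located_in", "New Zealand"),
]


def objects(subject, predicate):
    """Return every object asserted for a subject/predicate pair."""
    return [o for s, p, o in triples if s == subject and p == predicate]


def subjects(predicate, obj):
    """Return every subject asserted to have the predicate/object pair."""
    return [s for s, p, o in triples if p == predicate and o == obj]


print(objects("Colin McCahon", "born_in"))  # ['Timaru']
print(subjects("is_a", "modern painter"))   # ['Colin McCahon']
```

The larger questions in the paragraph above amount to chaining lookups like these across triples contributed by many institutions.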

Folk have been talking about variants of linked data, and its close cousin the semantic web, for a couple of decades now. It turns out to be a wee bit tricky to realise.

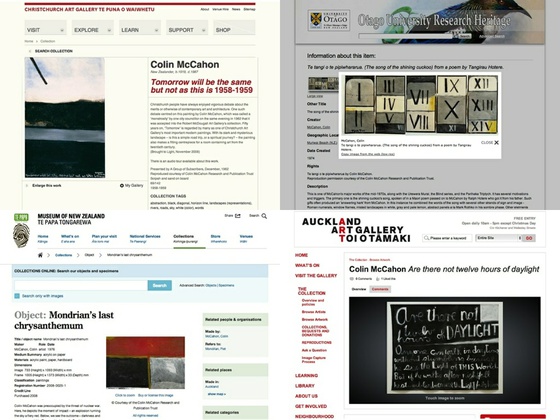

Our respective institutions structure data in different ways. This diversity complicates the act of forging links between entities and items. The National Library of New Zealand, the Alexander Turnbull Library, Te Papa, Auckland Art Gallery, Christchurch Art Gallery, Victoria University, Otago University and a host of other institutions hold various works, photographs and writings relating to the artist Colin McCahon. Although each institution is generally internally consistent in how it describes people, the metadata standards we follow and the data structures we employ differ from one another. Although it is easy for a human to recognise that "Colin McCahon" is the same as "McCahon, Colin" is the same as "McCahon, Colin John, 1919-1987", those subtle distinctions are tricky to teach a computer.

New Zealand may be small, but we’ve collected and digitised a fair amount of stuff. Truckload after metaphorical truckload of photographs, artworks, manuscripts, maps, articles, documents, audio recordings, films, ephemera and more. It is astonishing when you pause to think about it.

And those things, those millions of works and commentaries and records, they refer to tens of thousands of places, hundreds of thousands of people and countless events, both large and small, in our nation’s history.

The good news is that generations of thoughtful, dedicated cataloguers, curators and researchers have spent years describing this stuff. The tricky bit is that we employ a diverse range of practices, follow different standards, and make use of a multitude of metadata schemas.

Linking our data and metadata together requires us to overcome our differences. And that's what I've been exploring. Before I start geeking out on you, I'm going to note four underlying principles that inform this work and shaped my thinking. I raise them explicitly in order to test them on you. Please let me know if these premises are either incorrect or irrelevant.

1) Our collective interests overlap

We, the nation’s memory institutions and exhibition spaces, we share much in common with one another. There are differences (trust me, I’ll get to those in a moment) but there is tremendous overlap in our concerns and interests. Each of our institutions holds only a partial account of Aotearoa’s histories, contemporary states and possible futures. The works and writings and records relating to specific people and places and events are rarely conveniently housed in neat institutional buckets. The reality of our situation is that we are the collective stewards for a towering, glorious, densely connected tangle.

... and yet...

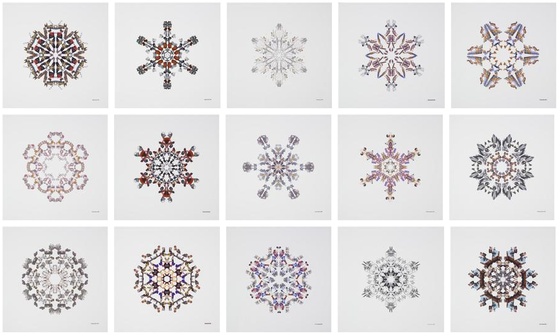

2) We are special snowflakes

Detail from 'Overcast'. Tan, Yuk King (2004) Te Papa Collections Online.

The second principle is that our institutions, collections and practices are incredibly diverse. Just a few of the ways we differ include our descriptive practices, the technical systems we use to manage our data, our metadata formats, where our staff’s expertise lies, our institutional histories, our responsibilities and governance structures, the audiences we serve and the budgets we operate within.

This heterogeneity is inevitable and probably also desirable. But it poses significant challenges to linking data because it takes work for dissimilar collections to be able to talk to one another... which leads to a third principle.

3) Standardising practice is hard and expensive

Five men working in a clock and watch making workshop. Webb, Steffano, 1880?-1967 : Collection of negatives. Ref: 1/1-004198-G. Alexander Turnbull Library.

We do stuff differently. Sometimes we’ve been doing stuff differently for a very long time indeed. We’ve built up particular sets of workflows, standards, expertise and technologies, investing considerable time and money along the way.

I’ve been involved in a few workshops both here and abroad where folk discussed what it might mean to align their practices. I gotta tell you... it sounds really hard. I think these "how is the way we do it different from the way you do it?" conversations are useful and enlightening. But from what I see the metadata singularity is a long way off.

4) The perfect is the enemy of the good

This final premise is a Voltaire quote that my colleague Michael Lascarides introduced me to. It is almost certain that there will be incorrect data and flawed methodology in what I am about to show you. However, I think that we need to start somewhere. So, I am sharing this as both strawman and scaffolding. A strawman because I think we need a first attempt to examine, consider and debate in order to work out what this should and shouldn't be. Scaffolding because I believe that, to realise the linked data dream, we may need a temporary structure that provides an initial frame to build things off but that we can safely dispose of when it no longer serves a useful purpose.

The experiment

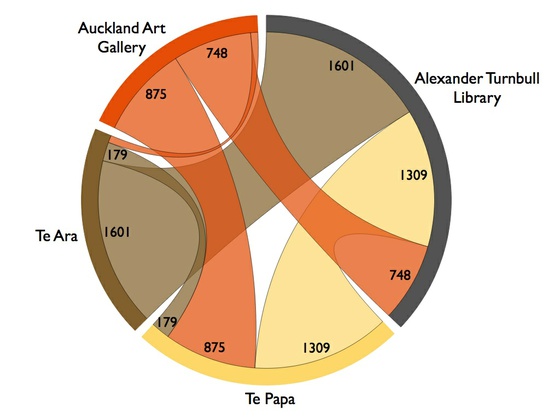

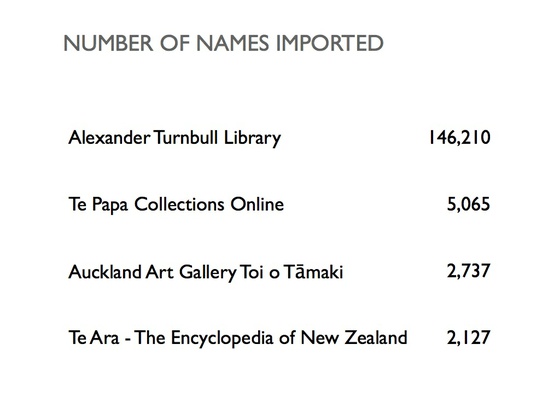

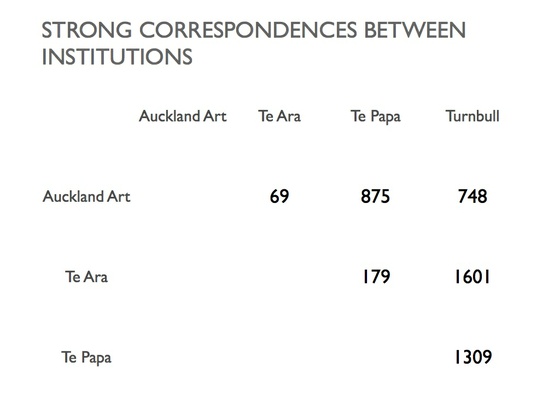

So, here’s the experiment. I approached staff from four quite different institutions and asked if I could have a crack at linking their person authority metadata together. I received 146,000 names from Alexander Turnbull Library—some people, some corporate entities—5,000 people from Te Papa, 2,700 artists from Auckland Art Gallery and 2,100 person entries from Te Ara Encyclopaedia of New Zealand. Let's trace some data through the workflow I set up.

I do not think of this work as creating a master or ultimate authority file. Instead, I think of it as being more akin to a telephone exchange or a translation service. When Te Papa talks about this person, it’s the same as when the Turnbull talks about this person. I want a web page and a data call for every person, place and event that we describe. And I want them linked across our institutions. That is my small dream.

The following slides demonstrate the basic workflow I'm using to perform the entity reconciliation. This is all still R&D work and subject to change at any moment.

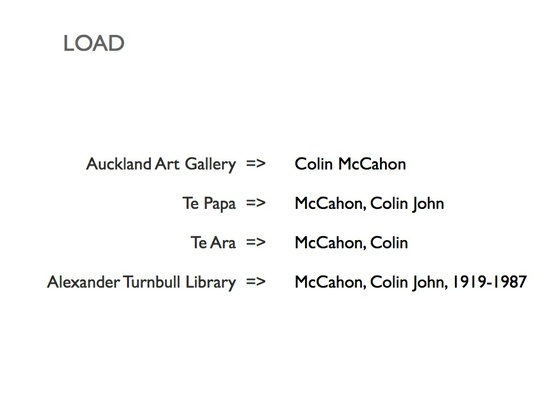

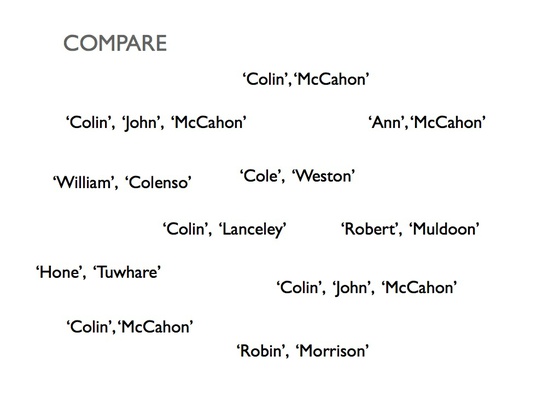

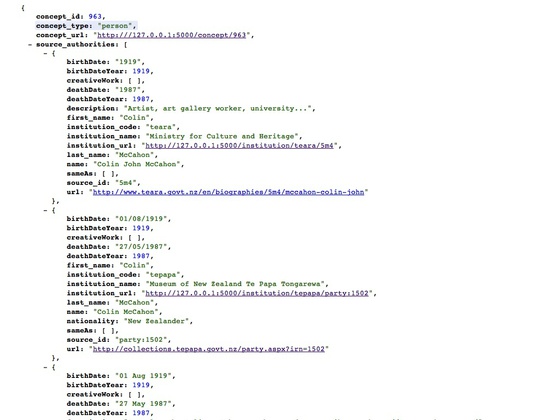

First, I import the people records from each institution, focussing on the name and birth / death data. You can see that each organisation structures its names in slightly different ways.

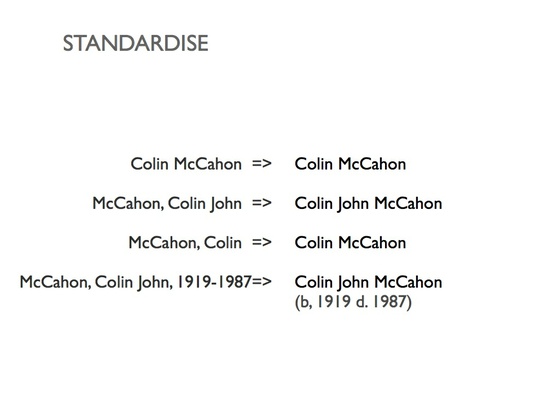

I perform a first pass over each authority set to standardise the name structure. For example, the Te Papa format presents the surname first, separated by a comma from the rest of the string. So, for Te Papa name records, I set up a rule that sends the surname to the back of the string and removes the comma.
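A rule like this one might look something like the following sketch. The function name `surname_last` is hypothetical, and it only handles the simple "Surname, Given Names" form. Formats that carry extra comma-separated parts, such as trailing life dates, would need their own rule.

```python
def surname_last(name: str) -> str:
    """Turn 'Surname, Given Names' into 'Given Names Surname'.

    Names without a comma are returned unchanged apart from trimming.
    """
    surname, comma, rest = name.partition(",")
    if not comma:
        return name.strip()
    return f"{rest.strip()} {surname.strip()}".strip()


print(surname_last("McCahon, Colin"))  # Colin McCahon
print(surname_last("Colin McCahon"))   # Colin McCahon
```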

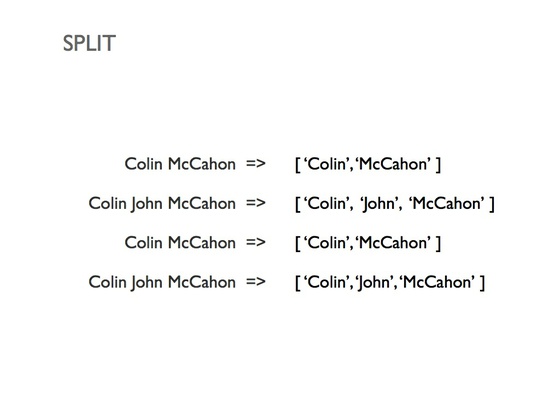

Next I split the names into parts based on the presence of spaces. This supplies a set of constituent tokens for easier comparison.
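As a rough illustration, splitting on whitespace is a one-liner in Python. Treating the first token as the first name and the last token as the surname is my assumption for this sketch, and `name_tokens` is a hypothetical helper name.

```python
def name_tokens(name: str) -> list[str]:
    """Split a normalised name into tokens on runs of whitespace."""
    return name.split()


tokens = name_tokens("Colin John McCahon")
# Assumption: after normalisation the given name comes first, the surname last.
first_name, surname = tokens[0], tokens[-1]

print(tokens)               # ['Colin', 'John', 'McCahon']
print(first_name, surname)  # Colin McCahon
```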

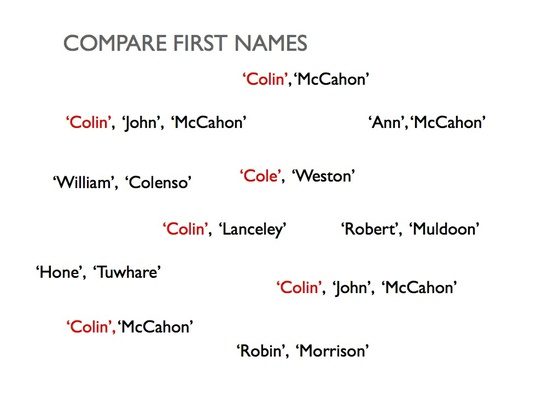

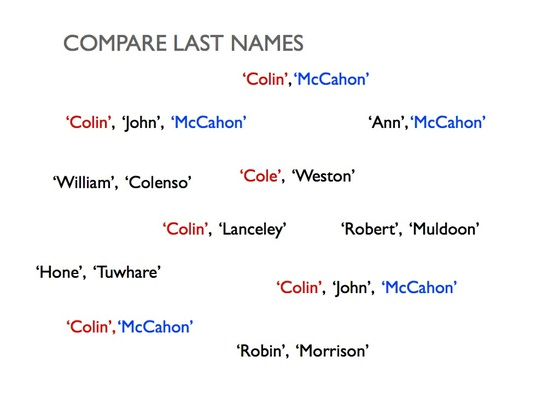

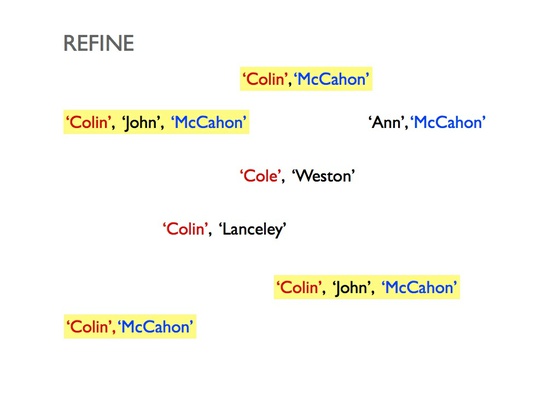

It gets a bit technical here, but the basic gist is that I throw all the names into the air and start comparing them. The more detailed explanation is that I create a hash table where the keys are the unique first names and each value is an array of identifiers for authority records that approximately match that first name. I create a second hash for all the surnames. (Note: I used the get_close_matches function from Python's difflib module to perform the fuzzy string matching.)
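Here is a small sketch of how such a hash table could be built with `get_close_matches`. The sample records, their identifiers, the misspellings and the `cutoff` of 0.85 are all invented for illustration, and `build_index` is a hypothetical helper rather than the code used in the experiment.

```python
from collections import defaultdict
from difflib import get_close_matches

# Made-up sample records: identifier -> (first name, surname).
records = {
    "aag:1": ("Colin", "McCahon"),
    "tepapa:2": ("Colin", "MacCahon"),
    "atl:3": ("Collin", "McCahon"),
    "teara:4": ("Rita", "Angus"),
}


def build_index(records, part, cutoff=0.85):
    """Map each unique name part to the ids of records that roughly match it.

    part is 0 for first names and 1 for surnames.
    """
    ids_by_exact = defaultdict(list)
    for record_id, names in records.items():
        ids_by_exact[names[part]].append(record_id)

    unique = list(ids_by_exact)
    index = {}
    for name in unique:
        # Ask for as many matches as there are names so nothing is cut off.
        close = get_close_matches(name, unique, n=len(unique), cutoff=cutoff)
        index[name] = sorted(rid for match in close for rid in ids_by_exact[match])
    return index


first_names = build_index(records, part=0)
surnames = build_index(records, part=1)

print(first_names["Colin"])  # ['aag:1', 'atl:3', 'tepapa:2']
print(surnames["Angus"])     # ['teara:4']
```

Comparing every unique name against every other is quadratic, so on a set the size of the Turnbull's a real run would probably need some form of blocking or caching.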

The algorithm runs over each unmatched person and finds other people with similar first and last names.

A shortlist of candidates is created from the intersection of the first and last name matches.

Finally, the algorithm compares the beautifully parsed birth and death dates, discarding any people who do not match.
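The shortlist and date check can be sketched with plain set operations. Everything here is hypothetical sample data, and treating a missing year as a mismatch is my assumption about what "conservative" might mean, not a description of the actual rule.

```python
# Made-up inputs: ids that roughly match one person's first name and surname,
# plus parsed (birth year, death year) pairs. None means the year is unknown.
first_name_matches = {"aag:1", "atl:3", "tepapa:2", "atl:9"}
surname_matches = {"aag:1", "atl:3", "tepapa:2", "teara:7"}
years = {
    "aag:1": (1919, 1987),
    "atl:3": (1919, 1987),
    "tepapa:2": (1919, None),
    "atl:9": (1919, 1987),
    "teara:7": (1919, 1987),
}


def dates_agree(a, b):
    """True only when birth and death years are identical (assumed rule)."""
    return a == b


def reconcile(person_id):
    """Return candidates that share both name parts and the same dates."""
    shortlist = (first_name_matches & surname_matches) - {person_id}
    return sorted(c for c in shortlist if dates_agree(years[person_id], years[c]))


print(reconcile("aag:1"))  # ['atl:3']
```

In this toy run `atl:9` and `teara:7` drop out because they match only one name part, and `tepapa:2` drops out because its death year is missing.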

The algorithm is conservative but provides solid results. The following coincidence matrix summarises the number of connections between institutions. So, for example, there are 69 people in the Auckland Art Gallery name authority database who could be matched to people in the Te Ara Encyclopaedia of New Zealand.
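A coincidence matrix of this kind can be tallied from the matched sets. The sketch below assumes each identifier carries an institution prefix before a colon, which is a convention I have invented here, and the sample sets are not real results.

```python
from collections import Counter
from itertools import combinations

# Made-up matched sets: each set holds the source identifiers judged to
# describe one person. The prefix before ':' names the institution.
matched_sets = [
    {"aag:1", "tepapa:2", "teara:3", "atl:4"},
    {"aag:12", "teara:9"},
    {"atl:77", "teara:31"},
]


def coincidence_counts(matched_sets):
    """Count, for each pair of institutions, how many people they share."""
    counts = Counter()
    for ids in matched_sets:
        institutions = sorted({i.split(":", 1)[0] for i in ids})
        for pair in combinations(institutions, 2):
            counts[pair] += 1
    return counts


counts = coincidence_counts(matched_sets)
print(counts[("aag", "teara")])   # 2
print(counts[("aag", "tepapa")])  # 1
```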

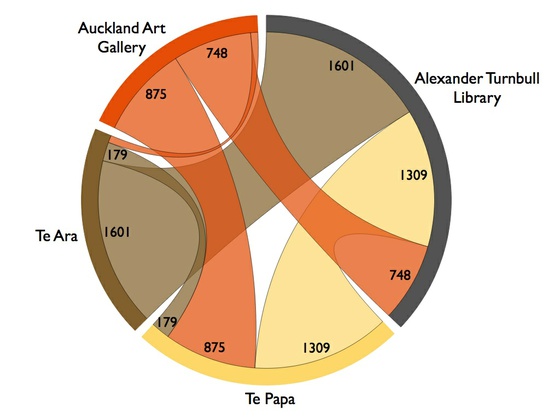

To help me understand the connections I created a chord diagram. This chart shows the number of common people that could be identified between pairs of institutions. It helps me visualise the amount of commonality between institutions in terms of overlapping people. For example, Te Ara has entries about many of the same people that the Turnbull does, but has less overlap with Auckland Art Gallery and Te Papa.

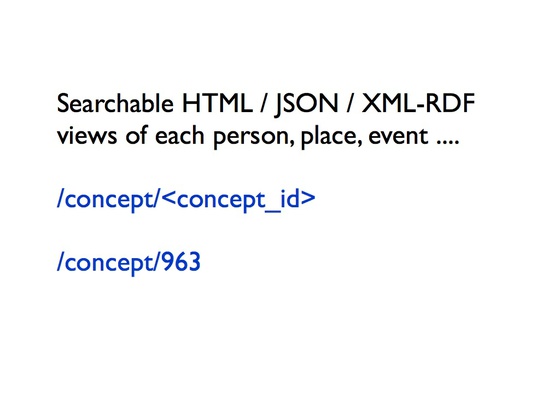

To explore this data I built a prototype API using Python and Flask. There is no search in this simple example, although that would be an important component of any DigitalNZ authority API. The prototype API supports two styles of request. First, you can ask for a particular person by specifying their concept_id—a unique integer assigned to a matched set of concepts.

This currently returns a schema.org(ish) description of all the source authority accounts of that person.

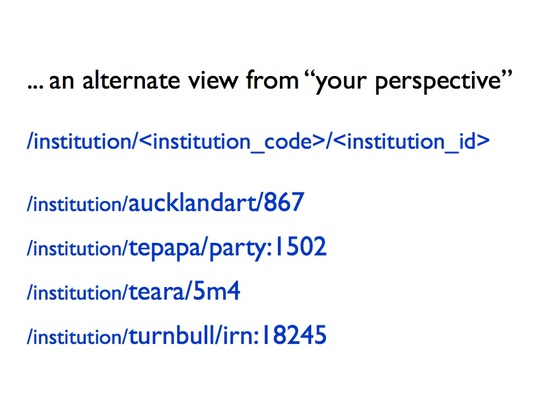

The other style of query provides institutions a means to look up people using their own identifiers. Consider, for example, how each of the four institutions refers to Colin McCahon internally.

Auckland Art Gallery Toi o Tāmaki : 867

Museum of New Zealand Te Papa Tongarewa : party:1502

Te Ara Encyclopedia of New Zealand : 5m4

Alexander Turnbull Library: irn:18245

Given that we harvest these unique identifiers, and our partner institutions are such important API users, it seems useful to provide endpoints that help bridge the various representations. An institution could look up an entity using their own naming scheme and see how all the other institutions describe that entity and the works it is connected to.
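The two request styles can be sketched without Flask as a pair of dictionary lookups. The `concept_id` value of 1, the function names and the shape of the returned dictionary are all assumptions made for illustration. Only the four source identifiers come from the list above.

```python
# Hypothetical in-memory store. The concept_id (1) is made up; the source
# identifiers are the ones listed above for Colin McCahon.
concepts = {
    1: [
        {"source": "Auckland Art Gallery Toi o Tāmaki", "id": "867"},
        {"source": "Museum of New Zealand Te Papa Tongarewa", "id": "party:1502"},
        {"source": "Te Ara Encyclopedia of New Zealand", "id": "5m4"},
        {"source": "Alexander Turnbull Library", "id": "irn:18245"},
    ],
}

# Reverse index: (source, identifier) -> concept_id.
by_source_id = {
    (record["source"], record["id"]): concept_id
    for concept_id, records in concepts.items()
    for record in records
}


def get_concept(concept_id):
    """First request style: fetch every source record for a concept."""
    return {"@type": "Person", "concept_id": concept_id, "records": concepts[concept_id]}


def lookup(source, identifier):
    """Second request style: start from an institution's own identifier."""
    concept_id = by_source_id.get((source, identifier))
    return None if concept_id is None else get_concept(concept_id)


result = lookup("Alexander Turnbull Library", "irn:18245")
print([r["id"] for r in result["records"]])  # ['867', 'party:1502', '5m4', 'irn:18245']
```

Keeping the reverse index separate from the concept store is what makes this feel like a telephone exchange: any institution's identifier routes to the same shared record.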

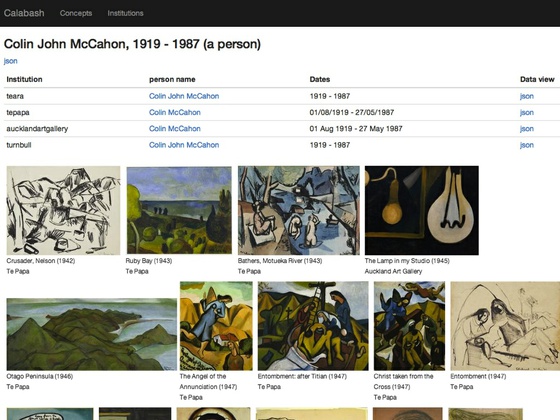

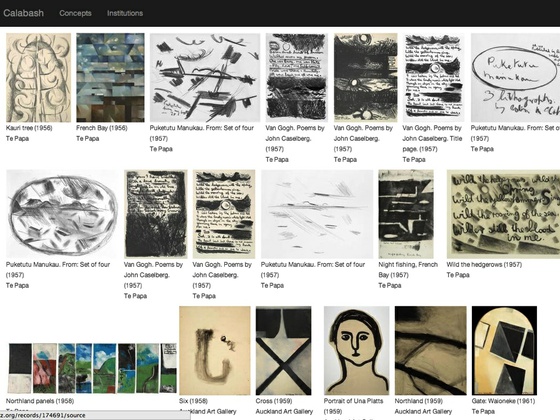

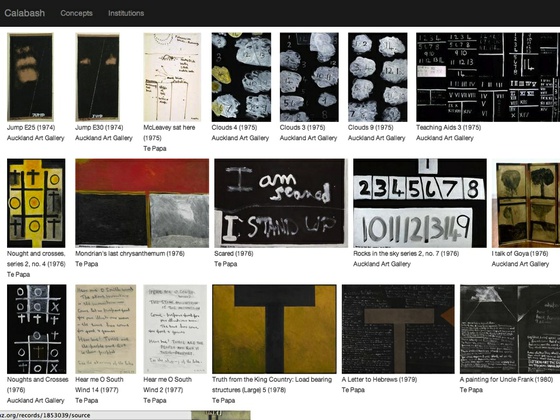

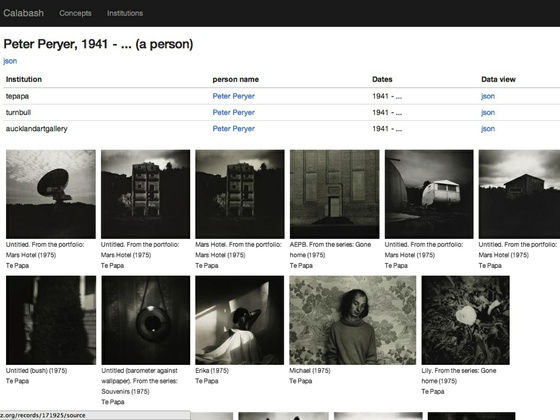

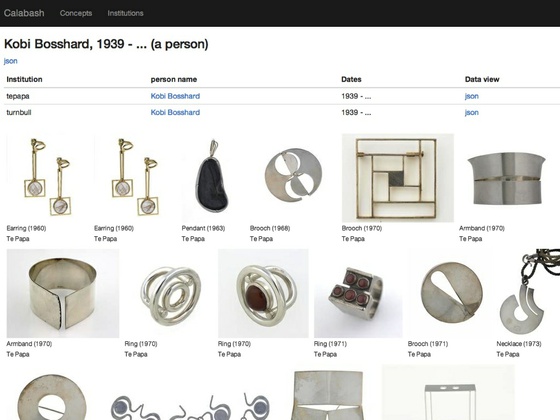

Finally, I built a small demonstration gallery application to explore the data. The idea was to create a website that enables a person to see all of a New Zealand artist's works in chronological order, irrespective of which institution each work is associated with. For any given artist, the application searches the DigitalNZ API for works where the person's name is recorded in the dc:creator field. The trick is that the search query is tailored for each institution. So, for example, it will search Auckland Art Gallery for "Colin McCahon" but Te Papa for "McCahon, Colin".
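The tailoring step might be sketched as follows. This does not call the DigitalNZ API: the dictionary keys `institution` and `creator` are placeholders rather than real API parameters, and the works and years are dummy values used only to show the chronological merge.

```python
# Name forms as each institution records them (from the example above).
name_forms = {
    "Auckland Art Gallery": "Colin McCahon",
    "Te Papa": "McCahon, Colin",
}


def creator_queries(name_forms):
    """Build one creator search per institution, using that institution's name form."""
    return [
        {"institution": institution, "creator": name}
        for institution, name in name_forms.items()
    ]


def chronological(works):
    """Merge works from every institution and sort them by year."""
    return sorted(works, key=lambda work: work["year"])


# Dummy results standing in for whatever the searches return.
works = [
    {"title": "Work B", "year": 1960, "institution": "Te Papa"},
    {"title": "Work A", "year": 1950, "institution": "Auckland Art Gallery"},
]

print(creator_queries(name_forms)[1]["creator"])     # McCahon, Colin
print([w["title"] for w in chronological(works)])    # ['Work A', 'Work B']
```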

... and of course you can view artists who are not Colin McCahon.

This work is in a tentative research phase but we have learnt a lot about the problem of entity reconciliation across databases through these experiments and we believe that it is feasible. DigitalNZ intend to move this out of an experimental phase and into production over the course of next year, folding place authorities into the mix too. So, my questions for you are, "Is this worthwhile?", "How should we do it?" and "Would this be useful?"

Comments


Nate Solas, you are a super-star. Thank you!

--Chris McDowall • 2013-12-19 00:00:00 UTC

Just revisiting this interesting exercise. Does the usual DigitalNZ CC-BY license apply to the blog content here? I'd like to use the chord diagram to illustrate a point in something I'm writing. Thanks.

--Tracie Almond • 2013-12-19 00:00:00 UTC

Hi Tracie, yes our blog content is under CC-BY license, so you are more than welcome to reuse the chord diagram from this post. You can find out more information on our Copyright page: http://digitalnz.org.nz/about/copyright. Cheers, Ting

--Ting • 2013-12-19 00:00:00 UTC
