Reflections on the 2013 Linked Open Data in Libraries, Archives and Museums Summit

Posted on 24 July 2013 by Chris

Linked data is an amazing yet elusive idea — elusive for at least two reasons. It can be difficult for people who don't live and breathe linked data to grasp. It also remains difficult for institutions to know where to start in providing linked data views of their collections. At DigitalNZ we have been exploring these issues both internally and with some of our content partners. This blog post provides a brief overview of what linked data is and offers some reflections on a recent linked open data summit.

Day two LODLAM session schedule by Jon Voss.

The conventional Web is beautiful in its simplicity—anyone can link any online document to any other online document. Linked data extends the Web by providing a means to identify and refer to specific entities or concepts (e.g. Colin McCahon, Timaru, modern painters) and a graph-based data model for making assertions about how those things are related (e.g. “Colin McCahon was born in Timaru”, “Colin McCahon was a modern painter”). Together the entities, concepts and relationships constitute a set of assertions about how we think the world is. This model can be queried, analysed, extended, shared and visualised. The clever bit is that assertions can be made by anyone and they can connect data across different datasets and institutions (e.g. “this Alexander Turnbull Library photograph depicts a Colin McCahon painting held by the Auckland Art Gallery.”). The hope is that linked data will enable our institutions to publish hugely diverse datasets in a gradual and sustainable way so that we can all share and build on one another's work.
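To make the graph model a little more concrete, here is a minimal sketch in plain Python (no RDF library) that stores the example assertions above as (subject, predicate, object) triples and answers simple pattern queries. The `http://example.org/` URIs, the `Photo123` and `Painting456` identifiers and the `bornIn`, `type`, `depicts`, `paintedBy` and `heldBy` predicates are all hypothetical placeholders; a real dataset would reuse published vocabularies (for example `rdf:type`) and sit behind a proper triple store or SPARQL endpoint.

```python
from typing import Optional

# Hypothetical namespace; real data would reuse published vocabularies.
EX = "http://example.org/"

Triple = tuple[str, str, str]

# The assertions from the paragraph above, one (subject, predicate, object) each.
graph: set[Triple] = {
    (EX + "ColinMcCahon", EX + "bornIn", EX + "Timaru"),
    (EX + "ColinMcCahon", EX + "type", EX + "ModernPainter"),
    (EX + "Photo123", EX + "depicts", EX + "Painting456"),
    (EX + "Photo123", EX + "heldBy", EX + "AlexanderTurnbullLibrary"),
    (EX + "Painting456", EX + "paintedBy", EX + "ColinMcCahon"),
    (EX + "Painting456", EX + "heldBy", EX + "AucklandArtGallery"),
}


def match(s: Optional[str] = None, p: Optional[str] = None,
          o: Optional[str] = None) -> list[Triple]:
    """Return the triples matching a pattern; None acts as a wildcard."""
    return sorted(
        t for t in graph
        if (s is None or t[0] == s)
        and (p is None or t[1] == p)
        and (o is None or t[2] == o)
    )


# Everything asserted about Colin McCahon.
for triple in match(s=EX + "ColinMcCahon"):
    print(triple)

# Two hops: which institutions hold works painted by Colin McCahon?
works = [s for s, _, _ in match(p=EX + "paintedBy", o=EX + "ColinMcCahon")]
holders = sorted({o for w in works for _, _, o in match(s=w, p=EX + "heldBy")})
print(holders)  # ['http://example.org/AucklandArtGallery']
```

Because every assertion has the same three-part shape, triples published by different institutions can simply be pooled into one set and queried together.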

In late June I attended the Linked Open Data in Libraries, Archives and Museums (LODLAM) Summit. A dedicated community of visionaries, technical experts and policy makers from all over the world assembled at Montreal's Grande Bibliothèque to share successes, detail case studies, explore concepts, move toward consensus on contested issues and collaborate on code and policy. What follows is a series of reflections on LODLAM in no particular order.

There were many sessions running in parallel, so I only witnessed a fraction of the conversations that took place. These notes should be treated as a personal transect rather than a complete survey of the territory. If you are interested in this topic, I encourage you to read other LODLAM accounts written by Yves Raimond, David Weinberger, Rurik Greenall and a host of participants at the LODLAM website.

The role of aggregators

I ran a session focused on how metadata aggregators (e.g. DigitalNZ, Europeana, DPLA) can participate in building a linked data ecosystem. There seemed to be two main conclusions from the discussion. The first was that, given the relative stability of these projects, it would be useful if the various aggregators could provide dependable linked data endpoints for the metadata they collect, even if they publish only basic metadata about cultural heritage objects. The second was that aggregators are well-positioned to infer and assert linkages between their contributing partners' authority control files. In DigitalNZ's case, for example, this would mean establishing and publishing assertions that the following entities are equivalent:

- Te Papa's 'McCahon, Colin'

- The Auckland Art Gallery's 'Colin McCahon'

- Alexander Turnbull Library's 'McCahon, Colin John, 1919-1987'

Although there are international projects like the Virtual International Authority File connecting the vocabularies of select national institutions, most cultural heritage organisations are unsure how to get started linking their datasets together. Establishing equivalence mappings between people, place and subject headings may act as a sort of scaffolding to hang other linked data assertions from.
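Here is a minimal sketch of what publishing such equivalences might look like, assuming each partner exposes a stable URI for its authority record (the `example.org` URIs below are hypothetical placeholders, not real identifiers). The aggregator records pairwise matches, merges them into clusters with a union-find structure, and emits `owl:sameAs` statements as N-Triples. Note that the Te Papa and Alexander Turnbull Library records end up in the same cluster even though nobody compared them directly.

```python
# owl:sameAs is a real OWL property; the record URIs are hypothetical.
OWL_SAME_AS = "http://www.w3.org/2002/07/owl#sameAs"

TE_PAPA = "http://example.org/tepapa/agent/mccahon-colin"
AAG = "http://example.org/aag/artist/colin-mccahon"
ATL = "http://example.org/atl/name/mccahon-colin-john-1919-1987"

# Pairwise matches, e.g. confirmed by a cataloguer or a matching tool.
pairs = [(TE_PAPA, AAG), (AAG, ATL)]

parent: dict[str, str] = {}


def find(x: str) -> str:
    """Return the cluster representative for x (union-find with path halving)."""
    parent.setdefault(x, x)
    while parent[x] != x:
        parent[x] = parent[parent[x]]
        x = parent[x]
    return x


def union(a: str, b: str) -> None:
    """Merge the clusters containing a and b."""
    parent[find(a)] = find(b)


for a, b in pairs:
    union(a, b)

# Group every URI under its cluster representative.
clusters: dict[str, list[str]] = {}
for uri in list(parent):
    clusters.setdefault(find(uri), []).append(uri)

# Publish one owl:sameAs link from a chosen head URI to each other member.
ntriples = []
for members in clusters.values():
    head, *rest = sorted(members)
    ntriples += [f"<{head}> <{OWL_SAME_AS}> <{other}> ." for other in rest]

print("\n".join(ntriples))
```

`owl:sameAs` is a strong claim (every statement about one URI then holds for the other), so some projects prefer the weaker `skos:exactMatch` for links like these.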

User interfaces are important

Corey Harper ran a lively session on linked data user interfaces. Harper opened by proposing that existing linked data applications are poor at presenting search results in their wider context. He suggested that we've relegated people, places and things to a lower status than they deserve and asked how we can fold narrative into our search interfaces. Here is a worthwhile video of the opening minutes, in which Harper sets the scene.

Harper's core question should be at the heart of any design effort to build search and visualisation applications intended for a wider audience: "Irrespective of the underlying data structure, how can we present entities and concepts to people in a way that is useful to them?" When we design user interfaces we should avoid limiting ourselves to mirroring the underlying data structures and instead focus on solving the problems our researchers and the public face. As several attendees agreed over drinks at the evening function, there is a good chance that the user interfaces that prove most popular will not be based on force-directed graphs.

David Weinberger published notes on this session.

Flawed but out there is a million times better than perfect but unattainable

Although Tim Sherratt did not attend the LODLAM summit this year, references to his various linked data experiments seemed to pop up in every second session (see, for example, the Small Stories demo from his fantastic talk at the 2012 National Digital Forum). I think there is a simple reason that Tim's shadow loomed so long over the discussions. When Tim makes things, he carefully details his thinking and process through talks and blog posts, and then he is brave enough to publish his linked data experiments for anyone to work with. The experiments generally have few technical dependencies, so they are straightforward to explore and extend. By sharing his work and underlying code early, Tim ensures that we are all able to build on his explorations.

Will your service still be up next Christmas?

This is the flipside to the "flawed but out there" observation. I heard variants of the following many times during the two days of LODLAM.

"We have created this really interesting pilot dataset. It's currently available at this endpoint but it's on a test server, so we aren't sure how long it's going to be up. Oh, and we might change the schema during the next iteration. So, please take a look, but don’t build anything important against it."

That is difficult information to work with. On the one hand, the data is often very interesting and I am keen to work with it. But if I can't be sure whether a service is going to be up next year, next month, or even next week, it is unlikely that I will spend time with it. I recognise that it is unfeasible to commit to standing these data services up indefinitely. Instead what I propose is that folk are clear about the lifespan of their pilots and tests. If you tell me, "this service will be up until Christmas" that is really useful information. An availability timeframe is enough for me to work out whether I should invest some time in making our data work together.

Licensing

Jon Voss ran a session on normalising linked data licenses and published a useful set of session notes on the LODLAM site. The major idea for this stream was that we should collectively aim for the simplest common license we can mutually agree on. Many people in the room advocated CC0 licensing of linked data to minimise legal and technical impediments. The rationale is that the fewer restrictions there are on a given piece of content, the more likely it is that the content will play well with other datasets. However, CC0 is not a valid option in countries like New Zealand or France, where it is not recognised by law. In short, licensing remains important, unresolved and hard.

Standards?

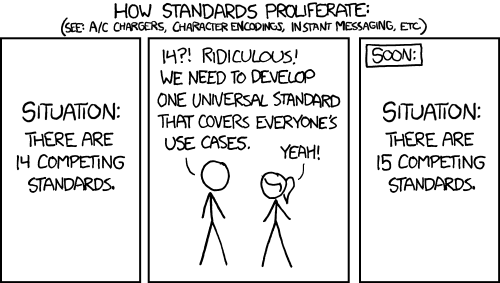

Over coffee, I had several conversations with people who are relatively new to the world of linked data where one of us bashfully confessed that we were overwhelmed by all the choices of how to do things. The following XKCD strip kept popping into my head.

XKCD - Standards.

Remember the graph-based data model I mentioned in the second paragraph? Well, that model is called the Resource Description Framework, usually shortened to RDF. Here is a (partial?) list of the major RDF serialisation formats:

- RDF/XML

- N-Triples

- Notation 3 (N3)

- Turtle (a simplified, RDF-only subset of N3)

- JSON-LD
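To show that these formats are different spellings of the same data model, the sketch below writes one hypothetical triple (the `http://example.org/` URIs are placeholders) as N-Triples, Turtle and JSON-LD by hand, then pulls the triple back out of the N-Triples and JSON-LD strings using only the Python standard library. It is illustrative only; real projects would use an RDF library rather than string templates and regular expressions.

```python
import json
import re

# One hypothetical triple; the example.org URIs are placeholders.
subject = "http://example.org/ColinMcCahon"
predicate = "http://example.org/bornIn"
obj = "http://example.org/Timaru"

# N-Triples: full URIs in angle brackets, one triple per line, ending in " ."
ntriples = f"<{subject}> <{predicate}> <{obj}> ."

# Turtle: the same triple, shortened with a prefix declaration.
turtle = (
    "@prefix ex: <http://example.org/> .\n"
    "ex:ColinMcCahon ex:bornIn ex:Timaru ."
)

# JSON-LD: a JSON object; "@id" marks a node identified by a URI.
jsonld = json.dumps({"@id": subject, predicate: {"@id": obj}}, indent=2)

for name, text in [("N-Triples", ntriples), ("Turtle", turtle), ("JSON-LD", jsonld)]:
    print(f"--- {name} ---\n{text}\n")

# Pull the triple back out of two of the serialisations to show they agree.
nt_triple = tuple(re.findall(r"<([^>]*)>", ntriples))
doc = json.loads(jsonld)
[(pred, value)] = [(k, v) for k, v in doc.items() if k != "@id"]
jl_triple = (doc["@id"], pred, value["@id"])
print(nt_triple == jl_triple == (subject, predicate, obj))  # True
```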

Several times during the conference, attendees challenged others to switch from representing assertions as triples to quads (triples with contexts). There is a bewildering, and I fear unsustainable, number of vocabularies and ontologies.
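A quad is a triple plus a fourth term naming the graph, or context, that the assertion belongs to. Here is a minimal sketch of why that can be useful, assuming hypothetical per-institution graph names and placeholder URIs: with contexts, the same birthplace claim can be traced back to each publisher, while flattening back to triples merges the two claims and loses that provenance. The `to_nquads` helper writes lines in N-Quads syntax.

```python
from typing import NamedTuple

# Hypothetical namespace and per-institution graph names.
EX = "http://example.org/"


class Quad(NamedTuple):
    s: str
    p: str
    o: str  # a URI, or a literal written with its quotes, e.g. '"1987"'
    g: str  # the graph (context) the assertion belongs to


quads = [
    Quad(EX + "ColinMcCahon", EX + "bornIn", EX + "Timaru", EX + "graph/tepapa"),
    Quad(EX + "ColinMcCahon", EX + "bornIn", EX + "Timaru", EX + "graph/atl"),
    Quad(EX + "ColinMcCahon", EX + "deathYear", '"1987"', EX + "graph/atl"),
]


def to_nquads(q: Quad) -> str:
    """Serialise one quad as an N-Quads line."""
    obj = q.o if q.o.startswith('"') else f"<{q.o}>"
    return f"<{q.s}> <{q.p}> {obj} <{q.g}> ."


for q in quads:
    print(to_nquads(q))

# With contexts we can ask who made an assertion...
sources = sorted(q.g for q in quads if q.p == EX + "bornIn" and q.o == EX + "Timaru")
print(sources)

# ...but flattening to triples merges the two birthplace claims into one.
triples = {(q.s, q.p, q.o) for q in quads}
print(len(triples))  # 2
```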

On some levels I think this diversity is desirable. It is unlikely people will get these things right from the beginning, so it is great that our community can explore all these different ways of representing knowledge. However, I think it also presents significant interoperability challenges and acts as a barrier to entry for new developers. It may also create a climate of tentativeness where institutions wait for the standards to settle down before participating. We should also be conscious that initiatives like schema.org, a collaboration between Google, Microsoft and Yahoo!, are waiting in the wings with active communities and accessible getting-started tutorials.

The legacy of bad inferences

Dave Riordan from NYPL Labs ran a fascinating session about the mechanics and ethics of performing knowledge graph analytics on cultural heritage data. Riordan played a training video produced by Palantir, a US data analytics company, and challenged the room to think about what it would be like if we had similar tools for exploring our collections.

One of the most powerful properties of a linked data graph is that novel information can be inferred automatically, both from the assertions it encodes and from the structure of its nodes and edges. Taking the example of people who are wrongly placed on no-fly lists, we debated the question: what is the legacy of a bad inference in a linked data graph? How does it echo through a system, and how long does it last?
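A toy illustration of how a bad inference can linger, assuming a single, simplified `owl:sameAs` rule (OWL reasoners apply a fuller set) and hypothetical compact identifiers. A mistaken match between two people copies a sensitive fact onto the wrong person. If inferred facts are materialised into the store, retracting the bad link leaves the inferred fact behind; if asserted facts are kept separate and inferences are re-derived, the fact disappears. Neither approach reaches copies that others have already harvested.

```python
# Hypothetical compact identifiers; a real graph would use full URIs.
Triple = tuple[str, str, str]
SAME_AS = "owl:sameAs"


def infer(facts: set[Triple]) -> set[Triple]:
    """Forward-chain a single rule to a fixed point:
    if (a sameAs b) and (b p o), then (a p o). sameAs is treated as symmetric."""
    closed = set(facts)
    while True:
        links = {(a, b) for a, p, b in closed if p == SAME_AS}
        links |= {(b, a) for a, b in links}
        new = {(a, p, o) for a, b in links
               for s, p, o in closed if s == b and p != SAME_AS}
        if new <= closed:
            return closed
        closed |= new


base: set[Triple] = {
    ("ex:personA", "ex:name", '"J. Smith"'),
    ("ex:personB", "ex:onNoFlyList", '"true"'),
}
bad_link: Triple = ("ex:personA", SAME_AS, "ex:personB")  # a mistaken match
leaked: Triple = ("ex:personA", "ex:onNoFlyList", '"true"')

# Approach 1: materialise inferences into the store, then retract the bad link.
store = infer(base | {bad_link})
store.discard(bad_link)
print(leaked in store)  # True: the inferred fact outlives its cause

# Approach 2: keep asserted facts separate and re-derive after retraction.
asserted = base | {bad_link}
asserted.discard(bad_link)
print(leaked in infer(asserted))  # False
```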

We need better tools for matching entities across institutions

Different institutions structure their data in different ways. The data schema and conventions used by Te Papa differ from those used by the Alexander Turnbull Library. Aaron Straup Cope from the Smithsonian Cooper-Hewitt Design Museum organised a session to explore how we can match entities, like people and places, across multiple institutional databases. Those gathered agreed that entity matching and schema cross-walking tools are an area where we need to make progress. As I noted in the earlier section on the role of aggregators, I see this as an important future area for DigitalNZ. One of my dreams is to make people and places first-class entities in the DigitalNZ API, so that a metadata request for the "Colin McCahon" person returns the works related to the artist and his relationship to them (e.g. paintings McCahon painted, books about him, photographs that depict him). Future blog posts will detail our explorations in this domain.
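As a very rough illustration of the matching problem, the sketch below compares the three McCahon headings from the aggregators section by normalising each to a set of name tokens and scoring pairs with Jaccard overlap. The normalisation rules, the `name_key` and `similarity` helpers and the 0.6 threshold are all hypothetical; real entity matching tools combine many more signals (dates, roles, related works) and still need human review.

```python
import re
from itertools import combinations


def name_key(heading: str) -> frozenset[str]:
    """Reduce a name heading to a set of lowercase name tokens using simple,
    hypothetical rules: drop life dates such as '1919-1987', move the text
    before the first comma to the end ('McCahon, Colin' -> 'Colin McCahon'),
    then split on whitespace."""
    text = re.sub(r"\b\d{4}\s*-\s*(?:\d{4})?", " ", heading)
    parts = [p.strip() for p in text.split(",") if p.strip()]
    if len(parts) >= 2:
        parts = parts[1:] + parts[:1]
    return frozenset(" ".join(parts).lower().split())


def similarity(a: str, b: str) -> float:
    """Jaccard overlap of two headings' name tokens, from 0.0 to 1.0."""
    ka, kb = name_key(a), name_key(b)
    union = ka | kb
    return len(ka & kb) / len(union) if union else 0.0


records = {
    "Te Papa": "McCahon, Colin",
    "Auckland Art Gallery": "Colin McCahon",
    "Alexander Turnbull Library": "McCahon, Colin John, 1919-1987",
}
THRESHOLD = 0.6  # hypothetical cut-off; would need tuning on real data

scores = {(a, b): similarity(records[a], records[b])
          for a, b in combinations(records, 2)}
for (a, b), score in scores.items():
    verdict = "candidate match" if score >= THRESHOLD else "no match"
    print(f"{a} / {b}: {score:.2f} ({verdict})")
```

Token overlap alone would happily pair two different people who share a name, which is why a score like this is best treated as a way to shortlist candidates for a person to confirm.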

Comments

Comments have been closed for this post

As clear an account as I have read for a long time. Glad you are thinking about these things Chris!!

--Sue • 2013-08-15 00:00:00 UTC

Thanks Sue. I am glad that it was useful. It has taken me a long time to get my head around this stuff; it feels good to be able to share these thoughts.

--Chris • 2013-08-15 00:00:00 UTC
